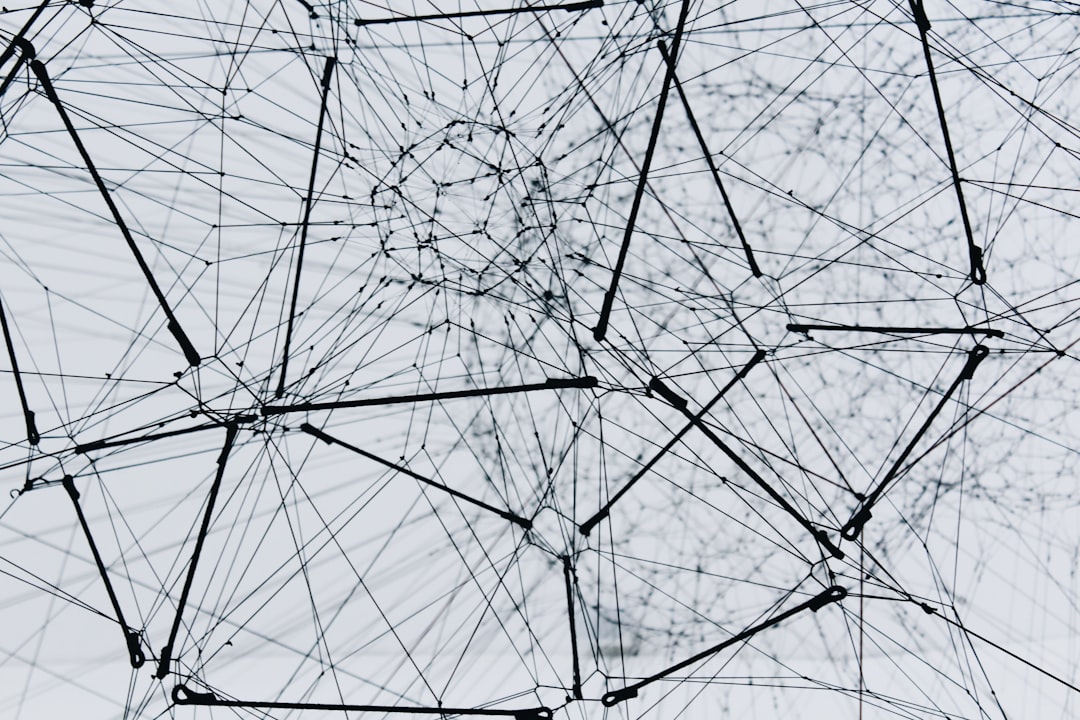

Neural networks, also called artificial neural networks (ANNs) or simulated neural networks (SNNs), are a subset of machine learning and the core of deep-learning algorithms. Their design is inspired by the human brain and mimics the way biological neurons signal one another. An artificial neural network is made up of layers of nodes: an input layer, one or more hidden layers, and an output layer. Each node, or artificial neuron, connects to other nodes and has an associated weight and threshold. When the output of an individual node exceeds its threshold value, the node is activated and sends data to the next layer of the network. Otherwise, that node passes no data along to the next layer.

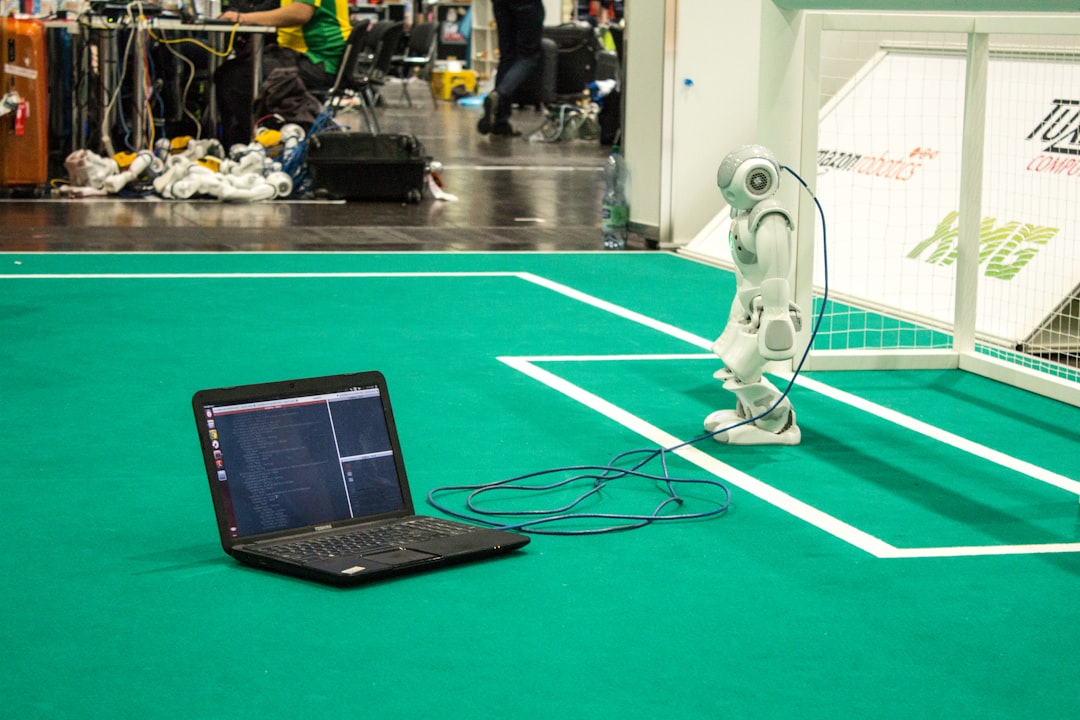

Neural networks learn and improve their accuracy through the use of training data. Once fine-tuned, these learning algorithms are powerful tools in computer science and artificial intelligence, helping to classify and cluster data at high speed. Tasks in speech recognition or image recognition can be completed in minutes, rather than the hours they would take if human experts did the identification manually. Take a deeper look at what an AI neural network is, how it works, and why it’s important.

How Neural Networks Work

An artificial neural network has three types of layers that allow information to flow from one layer to the next, loosely like the human brain. The input layer is the data entry point into the system, the hidden layers are where information is processed, and the output layer is where the system produces its result based on the data. The more complex the artificial neural network, the more hidden layers it will have. The network functions as a collection of nodes, called artificial neurons, that loosely models the neurons in an animal brain. An artificial neuron receives a signal, processes it, and signals the neurons connected to it.
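The flow from input layer to hidden layer to output layer can be sketched in a few lines of plain Python. This is a minimal illustration rather than a production implementation: the layer sizes, the weights and biases, and the function names `layer_forward` and `network_forward` are all hypothetical, and the sigmoid is just one common choice of processing function.

```python
import math

def sigmoid(z):
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def layer_forward(inputs, weights, biases):
    """Compute one layer. Each row of `weights` belongs to one neuron."""
    return [
        sigmoid(sum(w * x for w, x in zip(row, inputs)) + b)
        for row, b in zip(weights, biases)
    ]

def network_forward(inputs, layers):
    """Pass the signal through each (weights, biases) layer in order."""
    signal = inputs
    for weights, biases in layers:
        signal = layer_forward(signal, weights, biases)
    return signal

# Hypothetical values: 2 inputs -> 3 hidden neurons -> 1 output neuron.
hidden = ([[0.5, -0.2], [0.1, 0.4], [-0.3, 0.8]], [0.0, 0.1, -0.1])
output = ([[0.7, -0.5, 0.2]], [0.05])

prediction = network_forward([1.0, 2.0], [hidden, output])
print(prediction)  # a list holding one value between 0 and 1
```

Adding more `(weights, biases)` pairs to the list is all it takes to give this sketch more hidden layers.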

The Inner Workings of an Artificial Neural Network

In an AI neural network, each artificial neuron receives its stimulus as a signal in the form of a real number. The output of each neuron is then computed by a nonlinear function of the weighted sum of its inputs. Neurons and the connections between them, called edges, typically have a weight that adjusts as learning proceeds. The weight increases or decreases the strength of the signal at a connection. Neurons may also have a threshold, so that a signal is forwarded only if the aggregate signal crosses that threshold.
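A single neuron of this kind can be sketched as follows. The sigmoid is used here as one example of a nonlinear function. The bias term, the threshold of 0.5, and the names `neuron_output` and `fires` are assumptions made for illustration, not details taken from any particular library.

```python
import math

def sigmoid(z):
    """A common nonlinear function: maps any real number into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def neuron_output(inputs, weights, bias):
    """Weight each input signal, sum the results, then apply the nonlinearity."""
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    return sigmoid(z)

def fires(inputs, weights, bias, threshold=0.5):
    """Forward the signal only when the neuron's output crosses the threshold."""
    return neuron_output(inputs, weights, bias) > threshold

# Hypothetical weights: the first input excites the neuron, the second inhibits it.
weights, bias = [0.8, -0.4], -0.3

print(fires([1.0, 0.0], weights, bias))  # weighted sum is 0.5, so the output exceeds 0.5
print(fires([0.0, 1.0], weights, bias))  # weighted sum is -0.7, so the output stays below 0.5
```

A larger positive weight strengthens the signal arriving on that connection, and a negative weight weakens it.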

Neurons are aggregated into layers, and different layers may perform different transformations on their inputs. Signals travel from the first layer, or input layer, to the last layer, or output layer. Neural networks also contain some type of learning rule that modifies the weights of the neural connections according to the input patterns presented.
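One of the simplest learning rules is the classic perceptron rule, sketched below for a single neuron learning the logical AND of two inputs. It is offered only as an example of how weights can be adjusted from the patterns presented. Most modern networks are trained with gradient-based methods instead, and the function names, learning rate, and epoch count here are illustrative assumptions.

```python
def step(z):
    """Threshold activation: output 1 only when the summed signal is positive."""
    return 1 if z > 0 else 0

def predict(inputs, weights, bias):
    """Weighted sum of the inputs plus a bias, passed through the threshold."""
    return step(sum(w * x for w, x in zip(weights, inputs)) + bias)

def train_perceptron(samples, learning_rate=1.0, epochs=20):
    """Nudge the weights toward the target each time a prediction is wrong."""
    weights, bias = [0.0, 0.0], 0.0
    for _ in range(epochs):
        for inputs, target in samples:
            error = target - predict(inputs, weights, bias)  # -1, 0, or +1
            weights = [w + learning_rate * error * x
                       for w, x in zip(weights, inputs)]
            bias += learning_rate * error
    return weights, bias

# Training data: each input pattern paired with the desired output (logical AND).
AND_SAMPLES = [([0, 0], 0), ([0, 1], 0), ([1, 0], 0), ([1, 1], 1)]

weights, bias = train_perceptron(AND_SAMPLES)
for inputs, target in AND_SAMPLES:
    print(inputs, "->", predict(inputs, weights, bias), "expected", target)
```

Weights change only when a prediction is wrong, so once every pattern is classified correctly the remaining passes leave them untouched.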

The Benefits of Neural Networks

The terms neural networks and deep learning are often used interchangeably, even though they are distinct. The two are closely connected: deep learning is built on neural networks. Deep learning forms the cutting edge of artificial intelligence (AI) and is a specialized subset of machine learning rather than a synonym for it. It allows a computer to train on data, learn from it, and build new capabilities. Complex neural networks contain multiple hidden layers in addition to an input and output layer. These are called deep neural networks, and they are what make deep learning possible.

Neural networks can learn on their own, including through unsupervised learning, which makes them well suited to problems where the relationships are dynamic or nonlinear. This learning process works best when the patterns within the data are strong, although results depend on the application. Neural networks offer an analytical alternative to standard techniques, which are somewhat limited by strict assumptions of linearity, normality, and variable independence. A neural network’s ability to examine many kinds of relationships makes it easier and faster to model phenomena that would otherwise have been difficult or impossible to capture.